
In 2025, global enterprises spent $684 billion on AI initiatives. By year end, more than $547 billion of that investment had failed to deliver its intended value. The models worked. The demos were impressive. The procurement process was long and expensive. And then the software hit the real world and things stopped working as expected.

This is not a story about bad AI. The technology is genuinely capable. The problem is something more specific: getting AI to actually work inside a real enterprise environment is a fundamentally different engineering challenge from building AI that works in a lab or demo setting. And most organizations are not set up to solve that challenge.

This article breaks down why AI projects fail at such high rates, what the execution gap actually looks like in practice, and why a growing category of engineers called Forward Deployed Engineers exists specifically to close it.

AI Project Failure Rate: How Bad Is the Problem?

The numbers are consistent across every major research organization studying enterprise AI adoption.

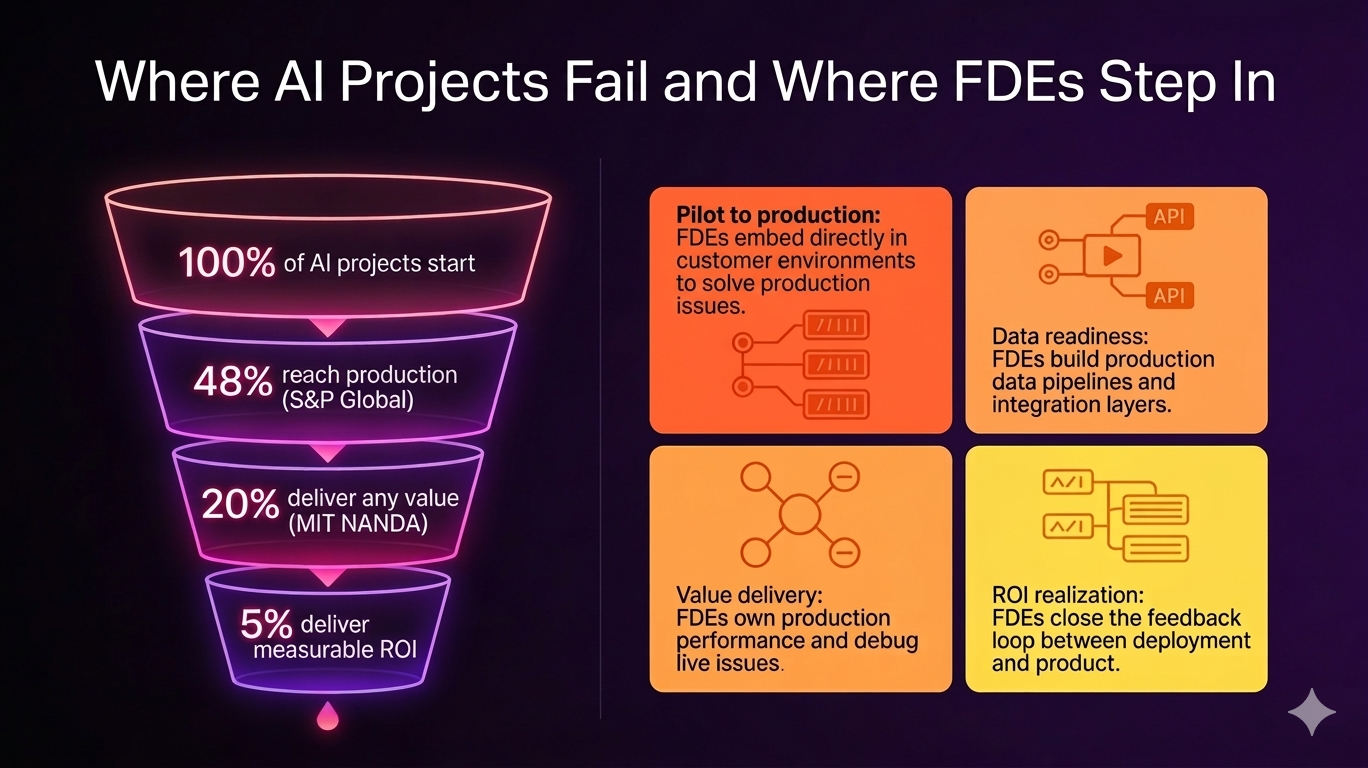

MIT's NANDA initiative, which studied over 300 AI deployments through structured surveys and practitioner interviews, found that 95% of generative AI pilots fail to deliver measurable profit and loss impact. Not 30%. Not 50%. Ninety-five percent.

The RAND Corporation puts the overall AI project failure rate at 80.3%, roughly twice the failure rate of non-AI IT projects. McKinsey's 2026 Global AI Survey found that 73% of deployments fail to achieve their projected return on investment. Gartner predicts that 60% of AI projects unsupported by AI-ready data will be abandoned through 2026.

Deloitte found that 42% of companies abandoned at least one AI initiative in 2025, up from 17% the prior year. The average sunk cost per abandoned project was $4.2 million.

"The hype on LinkedIn says everything has changed. Nothing fundamental has shifted." - COO at a large enterprise organization, quoted in MIT Project NANDA research, July 2025

These are not marginal studies. They represent the most authoritative voices in enterprise technology, and they agree on the same conclusion: most enterprise AI investments are not delivering their intended value.

Why AI Projects Actually Fail

The instinct is to blame the technology. Bad models, insufficient compute, wrong tool selection. But that is rarely the real cause.

Research from RAND found that 84% of AI failures are leadership or organizational in nature. In the same research, 73% of projects were approved without clear, pre-defined success metrics, 68% underinvested in foundational data work, and 56% lost executive sponsorship within six months of launch.

But beneath the leadership failures, there is a technical execution layer that is consistently underestimated. And it is this layer where the most preventable failures happen.

The pilot-to-production gap

S&P Global Market Intelligence found that only 48% of AI projects make it into production at all. The rest fail at or before the pilot stage. Of those that do reach production, most still fail to generate meaningful ROI.

The reason is straightforward. A pilot environment is controlled. The data is clean, the infrastructure is predictable, and the team running the experiment understands the system they built. A production enterprise environment is none of those things. Legacy systems built over decades sit alongside modern cloud infrastructure. Data exists in fragmented, inconsistent formats across dozens of tools. Workflows that were designed for humans need to be rebuilt to accommodate AI. The AI system that performed well in the demo starts behaving differently when it meets real operational data.

Data readiness

Gartner's definition of AI-ready data is precise: data aligned to specific use cases, actively governed at the asset level, supported by automated pipelines with quality gates, and continuously quality-assured. The word "continuously" is where most organizations fall short.

Traditional data management runs on quarterly or annual cadences. AI models in production need data quality signals measured in hours. That mismatch is where most data-related failures originate. Gartner's prediction that 60% of AI projects unsupported by AI-ready data will be abandoned through 2026 reflects exactly this gap: most organizations do not have data management practices mature enough to support production AI.
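
The following is a rough illustration of a continuous quality gate. The sketch checks a batch of records before it is allowed to feed a production model. It tests two things:
- freshness, measured in hours rather than quarters;
- the share of missing values in required fields.

The record shape, the field names (`updated_at`, `customer_id`, `email`) and the 6-hour and 2% thresholds are hypothetical assumptions chosen for the example. They are not values from Gartner or from any specific tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class GateResult:
    passed: bool
    reasons: list[str]


def quality_gate(records, required_fields, max_age=timedelta(hours=6),
                 max_null_rate=0.02, now=None):
    """Hypothetical gate: reject a batch that is stale or has too many missing values.

    Each record is assumed to be a dict with a timezone-aware "updated_at" datetime.
    """
    now = now or datetime.now(timezone.utc)
    if not records:
        return GateResult(False, ["batch is empty"])

    reasons = []
    # Freshness, measured in hours: the newest record must be younger than max_age.
    age = now - max(r["updated_at"] for r in records)
    if age > max_age:
        reasons.append(f"stale data: newest record is {age} old")

    # Completeness: the share of missing values per required field must stay under the limit.
    for field in required_fields:
        missing = sum(1 for r in records if r.get(field) in (None, ""))
        rate = missing / len(records)
        if rate > max_null_rate:
            reasons.append(f"{field}: {rate:.1%} missing (limit {max_null_rate:.0%})")

    return GateResult(passed=not reasons, reasons=reasons)


# Usage: one of two records lacks an email, so the batch is blocked.
now = datetime(2025, 6, 1, 12, tzinfo=timezone.utc)
batch = [
    {"customer_id": "c1", "email": "a@example.com", "updated_at": now - timedelta(hours=1)},
    {"customer_id": "c2", "email": None, "updated_at": now - timedelta(hours=2)},
]
result = quality_gate(batch, ["customer_id", "email"], now=now)
print(result.passed, result.reasons)  # False ['email: 50.0% missing (limit 2%)']
```

Running a check like this on every pipeline load, rather than in a periodic data review, is one way to make "continuously quality-assured" enforceable.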

Integration failure

AI models built in isolation rarely deliver value. They need to connect to CRM systems, ERP platforms, internal APIs, operational dashboards, and data pipelines that were often built years or decades before AI was a consideration. Building and maintaining this integration layer is harder than most organizations anticipate, and it almost always requires engineering-level involvement that goes beyond what a consulting engagement or a product implementation team typically provides.
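
Here is one small piece of such an integration layer. The sketch uses adapters that map customer records from two systems into one shared shape an AI model can consume, even though the systems use different field names and date formats. The two source formats are invented for illustration:
- a CRM export with `AccountName` and `LastModified`;
- a legacy ERP row with `cust_nm` and `chg_dt`.

Real systems would be reached through their own APIs, which this sketch deliberately leaves out.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Customer:
    """Shared shape the AI layer consumes, regardless of source system."""
    source: str
    customer_id: str
    name: str
    updated_at: datetime


def from_crm(row: dict) -> Customer:
    # Hypothetical CRM export: ISO 8601 timestamps with a trailing "Z".
    ts = datetime.fromisoformat(row["LastModified"].replace("Z", "+00:00"))
    return Customer("crm", row["Id"], row["AccountName"].strip(), ts)


def from_erp(row: dict) -> Customer:
    # Hypothetical legacy ERP row: day/month/year strings, assumed to be UTC.
    ts = datetime.strptime(row["chg_dt"], "%d/%m/%Y %H:%M").replace(tzinfo=timezone.utc)
    return Customer("erp", str(row["cust_no"]), row["cust_nm"].strip().title(), ts)


ADAPTERS = {"crm": from_crm, "erp": from_erp}


def normalize(source: str, rows: list) -> list:
    adapter = ADAPTERS[source]  # a KeyError surfaces an unmapped source early
    return [adapter(r) for r in rows]


# Usage: the same customer, described differently by two systems.
crm = normalize("crm", [{"Id": "001A", "AccountName": " Acme Corp ",
                         "LastModified": "2025-03-04T10:30:00Z"}])
erp = normalize("erp", [{"cust_no": 4471, "cust_nm": "ACME CORP",
                         "chg_dt": "04/03/2025 10:30"}])
print(crm[0].name == erp[0].name, crm[0].updated_at == erp[0].updated_at)  # True True
```

Keeping each source's quirks inside its own adapter means a format change in one system touches one function rather than every consumer of the data.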

No ownership of deployment outcomes

This is the failure mode that gets the least attention. Traditional software engineering teams build the model and hand it off. Consultants advise and exit. Nobody owns what happens in production after the handoff. When the AI agent starts providing wrong answers, or the model performance degrades over time, or an integration breaks after a third-party system update, there is no clear owner of the fix.

This accountability gap is where a significant amount of AI value gets permanently lost.
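
Ownership usually starts with someone watching the right signal. The sketch below is one hypothetical way to catch degrading performance:
- It keeps a rolling window of recent outcomes, for example whether each agent answer was marked correct.
- It raises a flag when rolling accuracy drops a set margin below the accuracy measured at launch.

The window size, the baseline and the 5-point tolerance are illustrative assumptions. In practice the flag would be routed to a named owner rather than printed.

```python
from collections import deque
from typing import Optional


class DegradationMonitor:
    """Flags when rolling accuracy falls below the launch baseline minus a tolerance."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 200):
        self.baseline = baseline
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # oldest outcomes drop off automatically

    def record(self, correct: bool) -> None:
        self.outcomes.append(1 if correct else 0)

    def rolling_accuracy(self) -> Optional[float]:
        if len(self.outcomes) < self.outcomes.maxlen:
            return None  # window not full yet: too little data to judge
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self) -> bool:
        acc = self.rolling_accuracy()
        return acc is not None and acc < self.baseline - self.tolerance


# Usage: 90% correct at launch, then 80% after (say) an upstream data change.
monitor = DegradationMonitor(baseline=0.90, tolerance=0.05, window=100)
for i in range(100):
    monitor.record(i % 10 != 0)
before = monitor.degraded()
for i in range(100):
    monitor.record(i % 5 != 0)
after = monitor.degraded()
print(before, after)  # False True
```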

What AI Failure Looks Like in Practice

Salesforce documented a clear example of this. A reservation booking platform had deployed its first AI agent on the Agentforce platform to answer questions from the enterprise customers using its service. In practice, the agent was failing. It could not retrieve accurate answers because of a problem in its data library: updates to knowledge articles were not syncing correctly with Data 360. The platform's internal team could not resolve it.

This is a typical production AI failure. The model was not the problem; the deployment, the data sync, and the integration between systems were. Salesforce deployed a Forward Deployed Engineer team, which diagnosed the data sync issue, worked with Salesforce's product team to fix the underlying glitches, and had the agent working within a week.
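
The Salesforce account describes the symptom rather than the diagnostic work. What follows is therefore only a generic sketch of a check that surfaces this class of bug. It compares each article's content hash in the source knowledge base with what the agent's retrieval index last ingested, then lists three kinds of gap:
- articles that are missing from the index;
- articles that are stale;
- articles that are orphaned.

The plain dictionaries stand in for whatever APIs the real systems expose. None of the names here are Salesforce APIs.

```python
import hashlib


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def find_sync_gaps(source_articles: dict, indexed_hashes: dict) -> dict:
    """Compare the source-of-truth knowledge base with what the retrieval index ingested.

    source_articles: article_id -> current article body (hypothetical export)
    indexed_hashes:  article_id -> hash of the body the index last ingested (hypothetical)
    """
    missing = sorted(a for a in source_articles if a not in indexed_hashes)
    stale = sorted(
        a for a, body in source_articles.items()
        if a in indexed_hashes and indexed_hashes[a] != content_hash(body)
    )
    orphaned = sorted(a for a in indexed_hashes if a not in source_articles)
    return {"missing": missing, "stale": stale, "orphaned": orphaned}


# Usage: kb-2 was edited but never re-synced, kb-3 never arrived, kb-9 was retired.
source = {
    "kb-1": "Refunds take 5 business days.",
    "kb-2": "Cancel up to 24 hours before check-in.",
    "kb-3": "Group bookings are now supported.",
}
index = {
    "kb-1": content_hash("Refunds take 5 business days."),
    "kb-2": content_hash("Cancel up to 48 hours before check-in."),
    "kb-9": content_hash("Retired article."),
}
print(find_sync_gaps(source, index))
# {'missing': ['kb-3'], 'stale': ['kb-2'], 'orphaned': ['kb-9']}
```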

The technical complexity of that fix was significant. But it was not the kind of complexity that shows up in a demo. It only appeared when the system met a real enterprise environment with real data and real operational constraints.

How Forward Deployed Engineers Fix AI Deployment Failures

The Forward Deployed Engineer role exists specifically to solve this execution layer problem. FDEs are engineers who work directly inside customer environments, own deployment outcomes end to end, and build the integration and infrastructure that makes AI actually function in production.

Unlike traditional consultants who advise and exit, or product engineers who build features for a general user base, FDEs take ownership of whether the AI works in a specific customer's real environment. That accountability distinction is what makes the role structurally different from anything that existed before.

In practice, what FDEs do in the context of AI deployment failure looks like this:

- They work inside the customer environment from day one. They see the actual infrastructure, the real data quality issues, the legacy system constraints, and the workflow realities that no discovery call ever surfaces.

- They build the integration layer that most AI projects skip or underestimate, connecting AI models to CRM platforms, ERP systems, internal APIs, and data pipelines in ways that are stable and maintainable in production.

- They own production performance, not just technical delivery. When the model starts drifting or a data sync breaks, the FDE is accountable for diagnosing and fixing it. There is no handoff to blame.

- They feed real deployment learnings back to the product team. FDEs function as the highest-fidelity product signal in the company, turning frontline chaos into product improvements at a pace that remote engineering teams cannot match.
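
Here is one small, concrete example of what "stable and maintainable in production" means for integration work. Calls to enterprise systems often fail transiently. Integration code therefore usually wraps them in retries with exponential backoff and jitter, rather than failing the whole workflow on the first timeout. This is a generic sketch. `TransientError` and the `flaky_crm_lookup` function in the usage example are hypothetical stand-ins, not part of any real client library.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, rate limits, etc.)."""


def call_with_retry(fn, *, attempts=4, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Call fn(), retrying TransientError with exponential backoff and full jitter."""
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of retries: surface the error to whoever owns the integration
            # The delay ceiling grows 0.5s, 1s, 2s, ... up to max_delay; jitter spreads retries.
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, delay))


# Usage: a lookup that times out twice, then succeeds.
calls = {"n": 0}


def flaky_crm_lookup():
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("CRM timed out")
    return {"account": "Acme Corp"}


result = call_with_retry(flaky_crm_lookup, sleep=lambda s: None)  # skip real waits here
print(result, calls["n"])  # {'account': 'Acme Corp'} 3
```

Passing `sleep` in as a parameter is a design choice that keeps the backoff logic testable without real delays.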

If you want to understand the full technical depth of what FDEs build inside these environments, the "Do FDEs Code" blog post covers this in detail. The short answer is yes: significantly, and in production.

Real Results When FDEs Are Involved

The outcomes when FDE teams are involved in AI deployment are meaningfully different from standard implementation approaches.

K1x needed to deploy a conversational AI agent inside a complex, regulated product environment. Maven's Forward Deployed Engineers embedded directly with the team, integrated the platform, synchronized hundreds of help center articles, and deployed the agent. Result: 80% ticket resolution within the first week.

Mastermind needed an AI agent capable of resolving customer conversations end to end, without human handoffs. Maven's FDEs configured the system to understand Mastermind's specific workflows and customer patterns. Result: 93% of chats now handled by AI without requiring a human handoff.

In both cases, the AI technology was not the differentiating factor. The differentiating factor was the engineering work done to make that technology function reliably inside a real operational environment. That is precisely what Forward Deployed Engineers are built to do.
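
Figures like "80% ticket resolution" or "93% handled without a handoff" depend on exactly how each conversation is counted. The sketch below shows one plausible definition: the share of conversations closed as resolved with no escalation to a human. The `Conversation` fields are assumptions for illustration. This is not necessarily how Maven computed its figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Conversation:
    resolved: bool    # closed with the customer's issue addressed (assumed field)
    handed_off: bool  # escalated to a human agent at any point (assumed field)


def handoff_free_rate(conversations: list) -> float:
    """Share of conversations the AI resolved end to end without a human handoff."""
    if not conversations:
        return 0.0
    handled = sum(1 for c in conversations if c.resolved and not c.handed_off)
    return handled / len(conversations)


# Usage: 93 resolved by AI alone, 5 resolved after a handoff, 2 abandoned unresolved.
week = (
    [Conversation(resolved=True, handed_off=False)] * 93
    + [Conversation(resolved=True, handed_off=True)] * 5
    + [Conversation(resolved=False, handed_off=False)] * 2
)
print(f"{handoff_free_rate(week):.0%}")  # 93%
```

This definition counts abandoned conversations that never escalated as failures, not successes. That choice matters: a metric that only looks at handoffs would score a customer who simply gave up as a win.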

What FDEs Cannot Fix

This is worth being direct about. Forward Deployed Engineers solve the deployment execution layer. They cannot fix AI projects that are missing the organizational conditions for success.

If a project has no clearly defined success metrics before build starts, FDEs cannot manufacture the measurement framework after the fact. If executive sponsorship collapses six months in, FDEs cannot sustain a project that the business has decided not to prioritize. If an organization is deploying AI to solve a problem that nobody in the business actually cares about, the best deployment engineering in the world will not produce meaningful adoption.

The finding that 84% of AI failures are leadership-driven is real, and it points to a problem that sits above the FDE's scope. FDEs are most effective when the strategy and sponsorship are in place and the remaining barrier is the technical execution of making AI work inside a real enterprise environment. That is the specific layer they own.

Why This Matters for Engineers Considering the FDE Path

The 80% AI failure rate is not a threat to engineers who understand enterprise deployment. It is a career signal.

Companies that are failing to get AI into production are not looking for more model builders. They already have models. They are looking for engineers who can take a working AI system and make it work inside a complex, messy, legacy-laden enterprise environment. That skill set is rare, and demand for it is growing faster than supply.

FDE job postings grew 800% between January and September 2025 according to data from Indeed and the Financial Times. Salesforce has committed to building a team of 1,000 FDEs. OpenAI expanded its FDE team to 50 engineers within the first year of launching the function. Every major AI company is now building this capacity because they have all hit the same wall: the AI is ready, but the deployment is hard.

The engineers who build expertise in deployment thinking, integration engineering, and customer-facing technical problem solving are entering a market where demand significantly outpaces supply. That is a durable career position, not a trend.

For a detailed breakdown of which companies are actively hiring FDEs in India and globally right now, see the separate article on that topic.

Building Skills for Real-World AI Deployment

The skills required to make AI systems work in real-world environments, such as integration engineering, production debugging, an understanding of infrastructure, and working within customer systems, are rarely taught in traditional software engineering education.

Most engineers learn these capabilities gradually through experience across multiple roles and projects. However, as demand for deployment-oriented engineering increases, more structured learning paths are emerging to accelerate this transition.

Platforms like FDE Academy focus specifically on this layer of engineering. Instead of only covering model development or theoretical concepts, the emphasis is on how systems behave in production, how integrations are built and maintained, and how engineers can operate effectively within complex enterprise environments.

This type of training reflects the reality of modern AI systems, where success depends less on building the model and more on making it work reliably in real-world conditions.

TL;DR

- Most AI projects fail not because of bad models, but due to poor real-world execution

- Enterprise environments introduce messy data, legacy systems, and integration challenges

- The biggest gaps are data readiness, system integration, and lack of ownership in production

- Forward Deployed Engineers (FDEs) solve this by working directly in customer environments

- They own deployment, integration, and real-world performance of AI systems

- As AI adoption grows, demand for FDEs is increasing rapidly

Frequently Asked Questions

What percentage of AI projects fail?

Multiple authoritative sources converge on a failure rate between 73% and 95% depending on how failure is defined. RAND Corporation found an 80.3% overall failure rate. MIT's NANDA initiative found 95% of generative AI pilots fail to deliver measurable P&L impact. McKinsey's 2026 survey puts the ROI failure rate at 73%. The figures vary because they measure different things, but the direction is consistent: most enterprise AI investments do not deliver their intended value.

What are the most common reasons AI projects fail?

The most frequently cited failure causes are poor data readiness, the gap between pilot and production environments, lack of integration with existing enterprise systems, absence of clear success metrics before the project starts, and loss of executive sponsorship during execution. Gartner predicts that 60% of AI projects unsupported by AI-ready data will be abandoned through 2026. Integration failure, where AI models are built in isolation and never properly connected to real business workflows, is a consistent cause across industries.

What is the difference between an AI pilot failing and an AI project failing?

A pilot failure means the proof of concept did not demonstrate sufficient value to justify moving forward. A project failure means the initiative reached production or near-production and still failed to deliver measurable business value. S&P Global found that only 48% of AI projects make it into production at all, meaning roughly half fail at or before the pilot stage. Of those that do reach production, many still fail to generate the ROI that justified the investment.

How do Forward Deployed Engineers help prevent AI project failures?

Forward Deployed Engineers address the execution layer of AI failure. They work directly inside customer environments to build the integration layer between AI systems and real enterprise infrastructure, own production deployment outcomes rather than handing off after setup, debug issues that only appear when AI meets real data and real workflows, and feed learnings back to the product team. FDEs are accountable for whether AI actually works in production, not just whether it was technically delivered.

Can Forward Deployed Engineers fix all types of AI project failures?

No. FDEs solve the deployment and integration execution layer. They cannot fix AI projects that lack executive sponsorship, have no defined success metrics, or are solving a problem the business does not actually care about. Research suggests 84% of AI failures have a leadership or governance root cause. FDEs are most effective when the strategy and sponsorship are in place but the technical execution of making AI work inside a real enterprise environment is the barrier.

Is the Forward Deployed Engineer role a good career path given the AI failure rate?

The high AI failure rate is one of the strongest arguments for pursuing the FDE career path. Companies struggling to make AI work in production are not looking for more model builders. They need engineers who can bridge the gap between a working AI system and a working AI deployment inside a complex enterprise environment. FDE job postings grew 800% between January and September 2025, and Salesforce has committed to building a team of 1,000 FDEs. The demand is a direct response to the execution gap causing so many AI projects to fail.
